Is Ubuntu still a Linux distribution*?

With the recent announcement that Ubuntu would be switching to Canonical's new Mir display server, Ubuntu is increasingly distancing itself from other Linux distributions on various levels. In order to approach the subject, we first need to define what a Linux distribution really is.

Most people agree that Android is not a Linux distribution. Sure, it may run the Linux kernel, but that is only secondary to the overall Android system. Most of its applications run in a Java virtual machine, and the system does a lot of things differently than most Linux distributions do (its graphics stack, input management, power management, software management, etc.). These subsystems have drifted far apart from their counterparts in most Linux distributions, as a result of Android being targeted at mobile devices (low-power, touch-centric, etc.). There is nothing inherently wrong with this approach, but it means that said subsystems cannot easily be incorporated back into regular Linux distributions, for various reasons. Some systems may not make sense in a non-Android environment (e.g. the Google Play Store), some of them make assumptions that a general-purpose distribution cannot ("there will be a touch screen"), and some of them depend on other Android-specific code. However, those systems can be used (and are being used) in Android distributions such as CyanogenMod or ParanoidAndroid.

Therefore, divergence of core software creates a new ecosystem, rather than contributing to the one it originated from. This distinction is important in order to give credence to the following definition of "Linux distribution":

*A Linux distribution is an operating system along with a set of software contributions which benefit the overall Linux software ecosystem.

By this definition, does Ubuntu still qualify as a Linux distribution? Let's find out.

Display server

This one is probably the easiest point to make. Canonical has announced that they will use Mir, a new display server that has been developed in-house since June 2012. The Mir specification document states that this move was required because the trusty old X display server is broken in countless ways (which is true; watch this talk if you are not convinced), because Weston (the reference Wayland compositor) doesn't fulfill all of their requirements (which is highly debatable), and because Android's SurfaceFlinger doesn't fulfill all of their requirements either. Thus, rather than researching the subject or contributing to the Wayland project, they decided to implement their own solution: Mir.

As a result, Ubuntu will be the only operating system to use the Mir display server. Given its poor reception, other distributions are unlikely to adopt Mir any time soon. Things may change, but as it stands, Ubuntu (and possibly its derivatives) will remain Mir's only users for the foreseeable future. Mir will be a cause of fragmentation for the Linux graphics landscape, rather than a contribution to it.

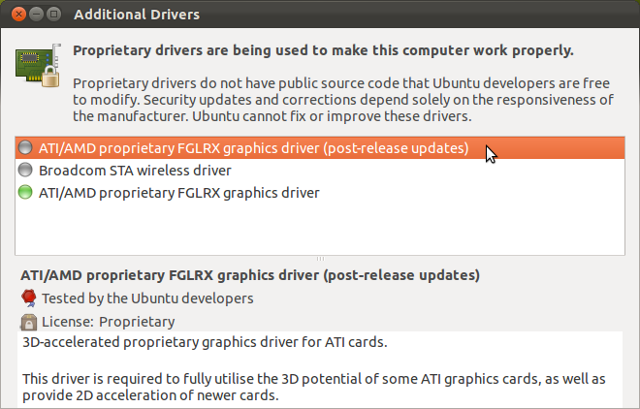

Graphics drivers

As a direct consequence of changing the display server to Mir, Ubuntu now requires its own set of proprietary graphics drivers. The current crop of proprietary graphics drivers only targets X and cannot be used with Mir at this time; either they would need to be extended to support Mir, or new drivers would need to be written altogether. Canonical claims it is currently speaking with Nvidia and other vendors in order to address this issue.

Therefore, if Ubuntu is the only operating system to use Mir, then it will also be the only operating system to use these new graphics drivers. Those drivers would be useless on distributions that are not using Mir. What's more concerning is that this moves attention and developer time away from writing drivers for Wayland or improving the existing ones for X. Splitting developer time means less-polished drivers for everyone. This is a lose-lose situation.

Init daemon

Ubuntu has used Upstart as its init daemon for quite some time now. Currently, Google's Chromium OS is the only other Linux distribution using Upstart, so while Ubuntu is not the only operating system to use it, most other distributions have either moved to systemd or are still using SysVInit. It remains to be seen whether Chromium OS will switch to systemd as well; it started using Upstart months prior to the announcement of systemd, when Upstart was the best choice to get the boot performance they were aiming for. This may not be the case anymore. A lot of distributions, some of which used Upstart in the past, have moved on to systemd or will switch to it in their next release (Fedora, Mandriva and derivatives, Red Hat Enterprise Linux, Arch Linux and derivatives, openSUSE).

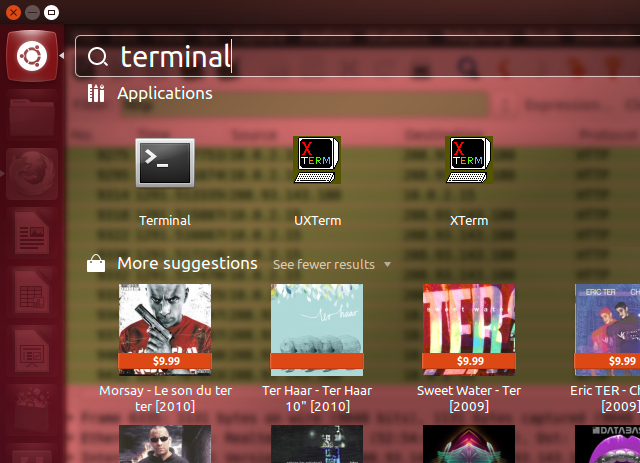

Desktop shell

Canonical has been hard at work on its in-house shell interface, Unity. It was rough around the edges at first, and has since grown into a usable state. However, perhaps because Canonical tends to use it as a vehicle for controversial decisions, or perhaps simply because it was not easy to package in its early days, it hasn't caught on in other Linux distributions, even though running it there is possible. Unfortunately, there are no usage statistics or non-anecdotal evidence to back up this assessment, but the fact that Ubuntu's most popular non-DE-oriented derivative, Linux Mint, decided not to use Unity and went as far as rolling their own shell instead may indicate a lack of confidence from the community.

Software management

As Ubuntu is a Debian-based operating system, it inherits Debian's package management system through .deb packages and Apt repositories. So far, so good; this is very standard.

And then there's the Ubuntu Software Center. It is primarily a frontend to the Apt package management system, but it also includes the ability to offer commercial software. However, the Ubuntu Software Center isn't simply selling software; it is selling packages containing binaries made specifically for a single version of Ubuntu. Using those packages on Ubuntu versions other than the one they were purchased on, or on non-Ubuntu Linux distributions, is not supported. Similar to the way buying software on Apple's App Store doesn't give you access to the same software on non-Apple platforms, buying software on Ubuntu's Software Center doesn't give you access to the same software on non-Ubuntu platforms. This is different from other means of software delivery, such as Steam or Desura, which give you access to all available versions of the software, or from buying it directly from the author's website. This decision locks users into the Ubuntu platform unless they are willing to pay again to get the same software on a different platform.

Approach to software development

One's approach to software development hugely impacts whether or not the software created by said approach helps or hinders the ecosystem.

Of course, since Ubuntu is an operating system running the Linux kernel and various other GPL-licensed software, their contributions to said projects (which do exist!) are licensed under the GPL as well, facilitating their adoption in other Linux distributions. Everything about this is good.

But then there are Canonical's latest projects, such as Unity, Ubuntu for phones and for tablets, and Mir. Those are examples of what Mark Shuttleworth refers to as "skunkworks":

Items with high "tada!" value that would be great candidates for folk who want to work on something that will get attention when unveiled.

Indeed, all of these projects have followed the same release pattern: let it stew internally for a few months and, once it's at boiling point (i.e. when it's almost usable but too far in development for anyone to reasonably object to further development), unleash it on the public all of a sudden. Effectively, this means that the project is secret, closed source, and out of the reach of public scrutiny and criticism for months on end. Then, once the figurative point of no return is reached, the project is unveiled, creating the aforementioned "high 'tada!' value". This marketing strategy may be effective at getting attention from media outlets and the like, but it's just that: a marketing strategy. The problem with it is that it goes against the idea of a collaborative development approach, in which multiple developers from multiple backgrounds with different interests keep each other in check as development progresses. Without continuous exposure to critics, a sudden reveal means all of the pent-up criticism is unleashed at once, hence the backlash over Mir. Not only that, it causes a huge amount of duplicated effort and additional fragmentation. Had Canonical expressed interest in working on a display server upfront, rather than only starting to hint at it more than half a year after development had started, the Wayland developers would probably have pointed them in the right direction, shared their experience with the Linux graphics stack (something which the current Mir developers seem not to have in spades), and hopefully kept development relatively close to the existing Wayland protocol (or conversely, adapted Wayland to follow Canonical's effort closely), such that no fragmentation would have been needed, or perhaps such that only one of the two projects would need to exist at all.

In a blog post about Mir, one of Canonical's Mir developers said:

In the case of Weston, the lack of a clearly defined driver model as well as the lack of a rigorous development process in terms of testing driven by a set of well-defined requirements gave us doubts whether it would help us to reach the “moon”. [emphasis added]

Read this a few times. What is it trying to say? It is saying that Weston was not a valid candidate because it didn't fit into Canonical's test-driven development model. There may be some truth to that, but is that enough of a reason to completely ignore an existing, promising, open solution, rather than simply add unit tests to it? It may be a lot of tedious work, but in the long run it would still be less work than the resulting cost of developing and maintaining a completely separate piece of software from scratch and fragmenting the ecosystem in the process.

He goes on to say:

We looked further and found Google’s SurfaceFlinger, a standalone compositor that fulfilled some but not all of our requirements. It benefits from its consistent driver model that is widely adopted and supported within the industry and it fulfills a clear set of requirements. It's rock-solid and stable, but we did not think that it would empower us to fulfill our mission of a tightly integrated user experience that scales across form-factors. [emphasis added]

The only criticism of SurfaceFlinger is that "it wouldn't empower [them] to fulfill [their] mission". What mission? "A tightly integrated user experience that scales across form-factors". It's no secret that Android runs just fine on many screen sizes from tiny to small to medium to huge, so the "scales across form-factors" part certainly isn't the issue here. We are left with:

We did not think that it would empower us to fulfill our mission of a tightly integrated user experience.

Canonical rejected SurfaceFlinger because it didn't empower them. Why? Because they couldn't control it enough to create that "tightly integrated user experience" they are after. They rejected SurfaceFlinger because it wasn't under their control. This speaks volumes.

Community communication

It's no secret that Canonical has been struggling with communication recently. Canonical's top representatives have had trouble getting their points across, as with the "Erm, we have root" post by Mark Shuttleworth, along with his ambiguous statements on "secret projects", which required a follow-up article to clarify the matter. And then there's calling Stallman "childish", thankfully followed by an apology for this statement. There is definitely a message delivery problem here. Not only that, there's also been some disregard towards popular community requests by triaging them away.

And then there's the departure of prominent Ubuntu members from the community. Some of the comments on that article are quite interesting. Other members are feeling like their work is being left out. It is clear that something is definitely wrong with the Ubuntu community.

The recent outrage over Mir is only part of the problem, but it is a significant part of it. Not only does it make developers feel "pissed on", it also puts an additional burden on them to cool off the resulting FUD.

To be clear, such community discontent doesn't change whether Ubuntu should be considered a Linux distribution; however, Ubuntu's popularity and the size of its following are directly correlated with how far its new technologies will spread to the rest of the ecosystem. Low popularity breeds isolation.

Conclusion

As Ubuntu has grown, Canonical has tended to diverge from the rest of the Linux ecosystem and follow its own path rather than trying to work with existing solutions, alienating part of its community in the process. This isn't news to anyone; people have been quick to notice that the word "Linux" hasn't been seen anywhere near the Ubuntu.com home page for quite a while now.

There is nothing wrong with differentiation. Differentiation is how distributions stand out from the crowd and how innovation happens: by doing one's own thing and by doing it better than anyone else. However, differentiation isn't the same thing as siloing oneself away. Differentiation doesn't have to imply severing one's ties to one's roots; siloing does.

Now may be a good time to reassess whether or not Ubuntu's divergence has arrived at a point where it is no longer appropriate to consider Ubuntu as part of the Linux software ecosystem, but rather as part of the Ubuntu software ecosystem.

Update: Jono Bacon responds to community concerns. Mark Shuttleworth also posted some blog posts in response to criticism. It is good to see them responding to the community's concerns.

Image acknowledgements: Starry Night, various official Linux distributions' logos, Ubuntu devices photo, Jockey screenshot from Ubuntu Vibes, Upstart Logo, Mark Shuttleworth photo by crshbndct, Ubuntu installfest 2011, Apple Inc. logo, Silo image.

Etienne Perot

Comments on "Is Ubuntu still a Linux distribution*?" (8)

#1 — by whatever

Yes. As long as there's a Linux kernel and a collection of software to go with it, it's a Linux distribution.

#2 — by Anonymous

This was a response on Reddit: Linux (or GNU/Linux) has traditionally been defined as an OS composed of the kernel, glibc, coreutils, and bash. So, according to the opinions of a 00s-era LUG, yes. Userland libraries, daemons, applications, and dev culture don't really factor in...

#3 — by Etienne Perot

@1, @2: I fully agree that, by that definition, Ubuntu is a Linux distribution and certainly will be for the foreseeable future.

This article is written under the context of another definition though, as described at the beginning of the article. This article isn't about trying to establish what the term "Linux distribution" really means; it is trying to establish that Ubuntu currently isn't contributing to the Linux software ecosystem as would commonly be expected from most Linux distributions.

#4 — by hatry

Good writing, I enjoy your writing style.

#5 — by Mark Shuttleworth

"A Linux distribution (often called distro for short) is a member of the family of Unix-like operating systems built on top of the Linux kernel. Such distributions are operating systems including a large collection of software applications such as word processors, spreadsheets, media players, and database applications. These operating systems consist of the Linux kernel and, usually, a set of libraries and utilities from the GNU Project, with graphics support from the X Window System."

Just thought I'd let you know this. Have fun with your unsatisfied standards, and your hipster attitude.

#6 — by Anonymous

I don't enjoy your writing style. This is hyperbole (protrolling) to the max.

#7 — by John Bergqvist

Technically it is a GNU/Linux Distribution, as it uses the Linux Kernel with the GNU libraries/utils etc. Yet I don't think Canonical really want to admit it. They're already calling the Linux Kernel the "Ubuntu Kernel" now, and I can't see any reference to GNU or Linux (or Debian, for that matter) on their official website, not unless you dig really deep. So yes, in my opinion, Canonical is embarrassed about Linux and GNU, and I predict that in a few years' time, they'll completely fork the kernel for themselves, or start their own kernel from scratch. IMO, I don't mind about Unity, and Ubuntu One, and Ubuntu stores, they're just bundled applications really. What I do care about is the fact that Canonical and Ubuntu need to embrace and acknowledge their roots, that Ubuntu Linux is a GNU/Linux Distribution which is based upon the Debian GNU/Linux Distribution, not run away from them, and disown them.

#8 — by fragger5

I don't actually give a damn so long as it WORKS